Raghu Kondapalli, Vice President of Architecture, Axiado Corporation

Gopi Sirineni, President & CEO, Axiado Corporation

This year, worldwide security spending exceeded $300 billion [1]. While many attacks stem from hostile insiders or the accidental publication of valuable data, the great majority of breaches result from inadequate security measures, and such breaches can be avoided.

Despite these investments in breach prevention, the total number of attacks is increasing at an alarming rate. On average, there are 4,000 ransomware attacks per day [2] (a 62% increase in 2020 over the previous year [3]), and the average cost of a breach has reached $4.24 million [4]. Indeed, annual losses due to cybercrime are expected to reach $10.5 trillion by 2025 [5].

While cybercriminals persistently develop new schemes to infiltrate systems, vendors are hard at work developing new technologies that can withstand attacks. One promising emerging approach is to use machine learning (ML) to predict imminent attacks before they happen. This article discusses the promise of machine learning in pre-emptive threat mitigation and the critical importance of sharing training datasets to improve model accuracy.

Machine Learning in Pre-Emptive Threat Mitigation

Cyberattacks come in many categories (data breaches, ransomware, side-channel attacks, and supply chain attacks, to name a few), each with its own characteristics and threat profile. The main challenge in designing an impenetrable system is combating threats that come in many types and countless variations, each targeting a different part of the system, striking at different times, pursuing different objectives, and entering through different ports. Another challenge is the dynamic nature of attacks: a defense mechanism must protect the system not only against known threats but also against those that are yet to be devised.

While a robust defense mechanism against cyberattacks is essential, the ability to detect imminent attacks and stop them before they happen is tremendously valuable. Unusual system behavior and traffic patterns are often preludes to a breach attempt, so recognizing them can help predict likely breaches. Fortunately, machine learning techniques have proven highly effective in behavioral modeling and anomaly detection and can be put to good use to fortify system defenses.

Before examining the role of machine learning in attack defense, it is helpful to first understand how legacy methods work. The great majority of today’s breaches succeed because target systems are inadequately protected against new types of attacks. Legacy methods provide static, reactive protection against known threats but lack proactive protection. For example, antivirus software for personal computers maintains a catalog of known malware and viruses, but it cannot detect new malware until its catalog is updated.
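To make this limitation concrete, here is a deliberately simplified sketch of catalog-based detection. It assumes the catalog stores SHA-256 digests of known samples; real antivirus engines use richer signatures and heuristics, and `KNOWN_MALWARE_HASHES` and the sample payloads are hypothetical. A variant that differs from a catalogued sample by a single byte produces a different digest and goes unrecognized until the catalog is updated.

```python
import hashlib

# Hypothetical catalog of SHA-256 digests of known malware samples.
# Exact-hash matching is a simplified stand-in for real signature formats.
KNOWN_MALWARE_HASHES = {
    hashlib.sha256(b"known-malware-sample-A").hexdigest(),
    hashlib.sha256(b"known-malware-sample-B").hexdigest(),
}

def is_known_malware(payload: bytes) -> bool:
    """Return True only if the payload's digest is already in the catalog."""
    return hashlib.sha256(payload).hexdigest() in KNOWN_MALWARE_HASHES

print(is_known_malware(b"known-malware-sample-A"))   # True: catalogued sample
print(is_known_malware(b"known-malware-sample-A2"))  # False: a modified variant slips through
```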

A new threat can be characterized as abnormal, out-of-the-ordinary behavior in a system. The ability to detect such abnormalities can enable a system to predict imminent attacks and take the necessary actions to prevent and mitigate them.

Anomaly detection is a pervasive and well-understood machine learning use case, widely employed in many industries; financial institutions, for example, use it to detect fraudulent credit card activity. This widespread use has laid the groundwork for cybersecurity vendors to deploy machine learning for pre-emptive threat mitigation.
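A minimal illustration of the idea, assuming a single numeric metric and a simple z-score rule rather than any particular production model: the detector learns what "normal" looks like from baseline data and flags values that deviate strongly from it. The metric (hourly failed-login counts), the sample values, and the threshold of 3 standard deviations are hypothetical choices for illustration.

```python
import statistics

def fit_baseline(normal_samples):
    """Learn the mean and sample standard deviation of a metric under normal operation."""
    return statistics.mean(normal_samples), statistics.stdev(normal_samples)

def is_anomalous(value, baseline, threshold=3.0):
    """Flag a value whose distance from the mean exceeds `threshold` standard deviations."""
    mean, std = baseline
    if std == 0:
        return value != mean  # degenerate baseline: any deviation is unusual
    return abs(value - mean) / std > threshold

# Hypothetical hourly counts of failed logins observed during normal operation.
normal_hours = [2, 3, 1, 4, 2, 3, 2, 1, 3, 2]
baseline = fit_baseline(normal_hours)

print(is_anomalous(3, baseline))   # False: within the normal range
print(is_anomalous(40, baseline))  # True: a burst that may precede a breach attempt
```

Real deployments model many correlated signals at once, but the principle is the same: no catalog of known attacks is needed, only a model of normal behavior.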

The greatest challenge in training anomaly detection models is the imbalanced nature of the datasets. In cybersecurity, systems usually behave normally and breaches rarely happen, so examples of attacks are vastly outnumbered by examples of normal operation. Nevertheless, the ability to leverage machine learning for pre-emptive threat mitigation presents a significant opportunity for security solution vendors: integrating AI engines that perform behavioral modeling can substantially enhance the efficacy of their products.
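Why imbalance is a problem can be shown with simple arithmetic. The sketch below uses a hypothetical dataset of 9,990 normal events and 10 attacks: a "model" that never raises an alarm scores 99.9% accuracy while catching none of the attacks. It also computes inverse-frequency class weights, n / (k · n_c), which is one common (but not the only) way to make rare attack examples count more during training; it is the same heuristic scikit-learn uses for `class_weight="balanced"`.

```python
from collections import Counter

def accuracy_and_recall(labels, predictions):
    """Accuracy over all events, and recall over the attack class (label 1)."""
    correct = sum(1 for y, p in zip(labels, predictions) if y == p)
    attack_preds = [p for y, p in zip(labels, predictions) if y == 1]
    recall = sum(attack_preds) / len(attack_preds) if attack_preds else 0.0
    return correct / len(labels), recall

def balanced_class_weights(labels):
    """Inverse-frequency weights: n_samples / (n_classes * n_samples_in_class)."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {cls: n / (k * c) for cls, c in counts.items()}

# Hypothetical dataset: 9,990 normal events (0) and 10 attacks (1).
labels = [0] * 9990 + [1] * 10
always_normal = [0] * len(labels)  # a trivial "model" that never raises an alarm

acc, rec = accuracy_and_recall(labels, always_normal)
print(f"accuracy={acc:.3f} recall={rec:.3f}")  # accuracy=0.999 recall=0.000
print(balanced_class_weights(labels))          # attack class weighted 999x more than normal
```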

With properly trained models, such solutions can successfully predict a variety of breaches and vastly improve overall system resiliency. They can be implemented either as centralized, cloud-based solutions that run larger models across multiple resources or as decentralized, coprocessor-on-the-server solutions whose dedicated ML hardware engines offer faster detection and response times.

Comprehensive Datasets Are a Prerequisite for Effective Machine Learning Models

The predictive capability of an AI-enabled security processor can be effective only if it is adequately trained on large, diverse datasets that are representative of all operational modes. Fortunately, such training is feasible, since equipment vendors continuously collect operational data and system logs that can be transformed into datasets for model training.

GENERATING DATASETS FROM SYSTEM LOGS

Every system in a network continuously collects and stores operational data in the form of logs. Essentially, logs are timestamped records of all events in a system, including readings from the computing environment, hardware status, environmental parameters, user activity, and operating system events.

Monitoring these logs is critical for security and performance, as they can reveal system problems, failures, performance metrics, and breaches. System logs contain an enormous volume of data, so making sense of them is a non-trivial task. Typically, specialized log monitoring software is used to decode logs and convert raw data into actionable insights. This software can be run locally or hosted by third-party companies that offer log monitoring as a service. These providers monitor the uploaded system logs and alert system administrators (i.e., their customers) as soon as failures or unusual activity are detected.

A system log includes records of both normal and abnormal operation, the latter containing the potential footprints and signatures of breaches and breach attempts. These records come in many types and from various levels of the system, including network, operating system, hypervisor, storage, security, and boot-time logs. When fed into an ML system, each of these log types has been shown to indicate abnormalities. This makes them an excellent source of training data for pre-emptive threat mitigation models.
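As a sketch of how raw logs might become training data, the example below buckets timestamped events into fixed one-minute windows and counts each event type, yielding one feature vector per window. The log line format, the event names, and the window size are all assumptions for illustration; real system logs vary widely by vendor and subsystem, and labels for supervised training would have to come from a separate source such as incident records.

```python
from collections import Counter, defaultdict
from datetime import datetime, timezone

# Hypothetical log format: "<timestamp> <subsystem> <event>", timestamps in UTC.
RAW_LOGS = """\
2022-06-01T10:00:05 auth LOGIN_OK
2022-06-01T10:00:41 auth LOGIN_FAILED
2022-06-01T10:01:10 storage WRITE
2022-06-01T10:01:12 auth LOGIN_FAILED
2022-06-01T10:01:13 auth LOGIN_FAILED
"""

# Feature columns: one count per event type (hypothetical event names).
EVENT_TYPES = ["LOGIN_OK", "LOGIN_FAILED", "WRITE"]

def logs_to_feature_rows(raw, window_seconds=60):
    """Bucket events into fixed time windows and count each event type per window."""
    windows = defaultdict(Counter)
    for line in raw.splitlines():
        if not line.strip():
            continue  # skip blank lines
        ts_text, _subsystem, event = line.split()  # subsystem unused in this sketch
        ts = datetime.strptime(ts_text, "%Y-%m-%dT%H:%M:%S").replace(tzinfo=timezone.utc)
        bucket = int(ts.timestamp()) // window_seconds
        windows[bucket][event] += 1
    # One row per window, in time order; missing event types count as 0.
    return [[windows[b][e] for e in EVENT_TYPES] for b in sorted(windows)]

for row in logs_to_feature_rows(RAW_LOGS):
    print(row)
# [1, 1, 0]
# [0, 2, 1]
```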

Creating Comprehensive Multi-Vendor Datasets

Datasets are system- and vendor-specific, and consequently, training sets derived from them are also vendor-specific. While large vendor-specific datasets are essential for model training, they cannot on their own make an ML-based threat detection mechanism effective against diverse threats. A model trained on a single vendor's dataset can predict only the events that vendor has experienced and is blind to breach attempts experienced by other vendors. This severe limitation undoubtedly degrades the overall value of the ML-based threat mitigation paradigm.

This limitation can be mitigated by training machine learning models on a combination of datasets amassed by multiple vendors instead of just one. The gold standard would be a single dataset, kept up to date at all times, that represents all threats experienced by all vendors.
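Combining vendor datasets first requires mapping each vendor's records onto a shared schema. The sketch below assumes two hypothetical vendors whose logs use different field names; after normalization and merging, the combined dataset covers threat labels that neither vendor's data contains alone. The field names, labels, and the `source` provenance column are illustrative assumptions, not an existing exchange format.

```python
# Hypothetical per-vendor field names mapped onto one shared schema.
SCHEMA_MAPS = {
    "vendor_a": {"ts": "timestamp", "evt": "event", "lbl": "label"},
    "vendor_b": {"time": "timestamp", "event_type": "event", "threat": "label"},
}

def normalize(vendor, records):
    """Rename each vendor-specific field to its shared-schema name and tag provenance."""
    mapping = SCHEMA_MAPS[vendor]
    rows = []
    for rec in records:
        row = {common: rec[src] for src, common in mapping.items()}
        row["source"] = vendor  # keep provenance for auditing and per-vendor analysis
        rows.append(row)
    return rows

def merge_datasets(per_vendor):
    """Normalize every vendor's records and merge them into one time-ordered dataset."""
    merged = []
    for vendor, records in per_vendor.items():
        merged.extend(normalize(vendor, records))
    merged.sort(key=lambda r: r["timestamp"])  # ISO-8601 strings sort chronologically
    return merged

vendor_a = [{"ts": "2022-06-01T10:00:05", "evt": "LOGIN_FAILED", "lbl": "brute_force"}]
vendor_b = [{"time": "2022-06-01T09:59:00", "event_type": "BULK_ENCRYPT", "threat": "ransomware"}]

combined = merge_datasets({"vendor_a": vendor_a, "vendor_b": vendor_b})
print(sorted({r["label"] for r in combined}))  # ['brute_force', 'ransomware']
```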

DIVERSE DATASETS RESULT IN SUPERIOR THREAT DETECTION

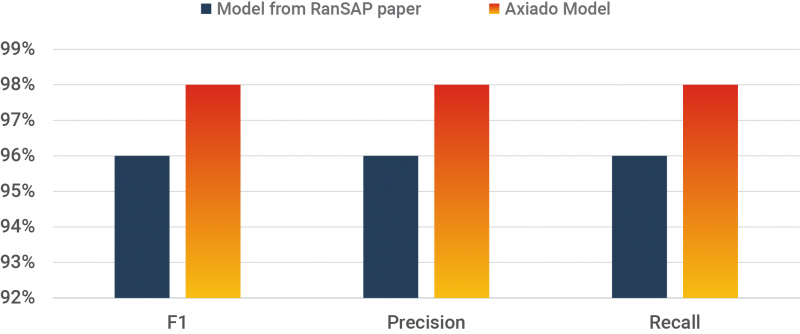

The benefit of multi-vendor datasets is not only intuitive; experiments have shown that machine learning models trained on diverse datasets are far more effective at pre-emptive threat detection. For example, Axiado Corporation compared two instances of the same ransomware-detection ML model, each trained on a different dataset. The model trained on Axiado’s multi-vendor dataset performed significantly better than the one trained on the RanSAP dataset [6].

In another experiment, an anomaly detection ML model trained on a diverse USB intrusion dataset developed by Axiado was used to distinguish between malicious and legitimate accesses made through a system's USB port. Although this model performed remarkably well, its training dataset still lacks the many USB threat profiles experienced by other vendors.

Evidently, greater diversity in training datasets leads to superior pre-emptive threat detection.

SHARING DATASETS BENEFITS MULTIPLE INDUSTRIES

Broad agreement among competing companies is often difficult to achieve. When it comes to sharing datasets, however, leading security stakeholders appear to agree widely on the benefits of access to a combined dataset for model training.

For example, Derek Chamorro, Staff Security Engineer at Cloudflare, believes that open, collaborative innovation in end-to-end security offers the industry significant benefits over closed, proprietary models. According to Dr. Mallik Tatipamula, CTO of Ericsson, Silicon Valley, these benefits are particularly valuable for Industry 4.0, with its integration of 5G, edge computing, and AI/ML.

CHALLENGES OF SHARING DATASETS

Although the merits of open collaboration are widely acknowledged, dataset sharing among companies has yet to materialize. This lack of progress is not surprising and can be attributed to several challenges.

First, vendors are concerned that their datasets could fall into the wrong hands and expose their security deficiencies. This is a valid concern, since many successful cyberattacks are facilitated by hostile insiders disclosing sensitive information, and sharing datasets in the open would naturally increase the likelihood of such events by widening the audience with access. Furthermore, vendors willing to share datasets may be unable to do so because there is no formal, secure mechanism for exchanging them. Such a mechanism would also need to support continuous updates, since these datasets are effective only if they are kept up to date at all times.

Second, some vendors may be concerned that their datasets could reveal trade secrets and give competitors an edge. For example, a dataset can reveal that a vendor focuses primarily on a subset of threats and not on others. Moreover, datasets can contain sensitive information about end customers, which must be carefully masked before a dataset can be shared. This task can be complicated and resource-intensive.
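One possible masking approach, sketched here under stated assumptions, is keyed pseudonymization: customer identifiers such as usernames and IPv4 addresses are replaced with HMAC-SHA256 tokens. Because the same input always maps to the same token, correlations across log lines survive, but recipients without the vendor's secret key cannot recompute the mapping. The key, the log format, and the two regular expressions are hypothetical, and a real deployment would need to cover many more identifier types.

```python
import hashlib
import hmac
import re

# Hypothetical secret held only by the sharing vendor; it is never shared.
PSEUDONYM_KEY = b"vendor-private-key"

IPV4 = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")  # simple IPv4 pattern (no range check)
USER = re.compile(r"user=(\S+)")                   # hypothetical "user=<name>" field

def pseudonym(value: str, key: bytes = PSEUDONYM_KEY) -> str:
    """Deterministic keyed token: same input and key give the same token."""
    return hmac.new(key, value.encode(), hashlib.sha256).hexdigest()[:12]

def mask_line(line: str) -> str:
    """Replace IPv4 addresses and usernames in a log line with keyed tokens."""
    line = IPV4.sub(lambda m: "ip_" + pseudonym(m.group()), line)
    return USER.sub(lambda m: "user=u_" + pseudonym(m.group(1)), line)

print(mask_line("LOGIN_FAILED user=alice src=203.0.113.7"))
```

Keyed hashing alone does not guarantee anonymity, since rare event patterns may still identify a customer, which is part of why this masking task is resource-intensive.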

Overcoming these hurdles is challenging but not impossible. The potential benefits of sharing datasets are enormous for all stakeholders, and thus the cybersecurity community should work together to find an effective, efficient, and safe mechanism for data sharing.

Charter to Administer Secure and Orderly Sharing of Datasets

Developing a bullet-proof cybersecurity defense mechanism that can identify and thwart all cyberattacks is too big a challenge for any single company; it must be a collective effort. Machine learning models have proven very effective at predicting breaches, but only when they are trained on comprehensive, up-to-date datasets.

An independent and unbiased governing organization that facilitates a safe and orderly exchange of datasets would provide a robust logistical and legal framework for the cybersecurity industry. To be viewed as credible, trustworthy, and fair by all members, the organization should be collectively funded and empowered to govern and enforce industry policies.

Forming a governing organization chartered to administer the secure and orderly sharing of datasets may be neither easy nor quick, but the challenges are not insurmountable. We acknowledge the severity of this issue and the urgency of addressing it. Accordingly, Axiado is calling on cloud service providers, OEMs, cybersecurity service operators, corporate CISOs, and government agencies to join an industry-wide collaboration on sharing datasets in pursuit of better cybersecurity.

References

[1] Braue, D. (2021, September). Global Cybersecurity Spending to Exceed $1.75 Trillion from 2021-2025. Cybercrime Magazine.

[2] Davies, V. (2021, June). 5 Ransomware Statistics You Should Know About in 2021. Cyber Magazine.

[3] Statista. (2021, March). Annual Number of Ransomware Attacks Worldwide from 2016 to 2020.

[4] Tunggal, A. T. (2022, June). What is the Cost of a Data Breach in 2022? UpGuard.

[5] Morgan, S. (2020, November). Cybercrime to Cost the World $10.5 Trillion Annually by 2025. Cybercrime Magazine.

[6] Hirano, M., Hodota, R., & Kobayashi, R. (2022). RanSAP: An open dataset of ransomware storage access patterns for training machine learning models. Forensic Science International: Digital Investigation, 40.

Authors

RAGHU KONDAPALLI

Vice President of Architecture, Axiado Corporation

Raghu Kondapalli has more than 25 years of system, chip, and software architecture experience with companies such as Intel, LSI, Marvell, and Nokia. His architectural contributions have led to the production of many systems on chip (SoCs), AI/ML switches, and computing platforms. Kondapalli is passionate about driving customer-intimate innovation that leads to win-win products. He holds more than 40 patents covering various processing stages and has made significant contributions to standards in Ethernet, edge computing, cellular, and data center technologies.

GOPI SIRINENI

President & Chief Executive Officer, Axiado Corporation

Gopi Sirineni is the President & CEO of Axiado, spearheading transformative security technologies with AI in hardware. He is a Silicon Valley veteran with more than 25 years of success in the semiconductor, software, and systems industries. His career highlights include executive positions at Marvell Semiconductor, AppliedMicro, Cloud Grapes, and Ubicom, which was acquired by Qualcomm under his direction. Before joining Axiado, Gopi spent eight years as a vice president of Qualcomm’s Wired/Wireless Infrastructure business unit, where he developed market-dominating Wi-Fi and Wi-Fi SON technology, and his pioneering foresight into distributed mesh technology created the connected, AI-based home market segment. Gopi has contributed to both Ethernet and Wi-Fi standards and chaired IEEE Project Authorization Requests (PARs).