Dr. Ashish Darbari

Founder and CEO

Axiomise

The Semiconductor Industry profile is changing dramatically. Hardware has a newfound prominence on the world stage and the industry is no longer dominated by traditional semiconductor giants. Instead, an increasing number of software companies, including tech giants Apple, Amazon, Facebook, Google, Microsoft and Tesla, not known previously to be in the chip development business, have their own dedicated hardware development teams. With the advent of open-source architectures, bringing up silicon using an open-source framework and tools is slowly becoming mainstream.

Chip design is getting easier. Functional verification is not.

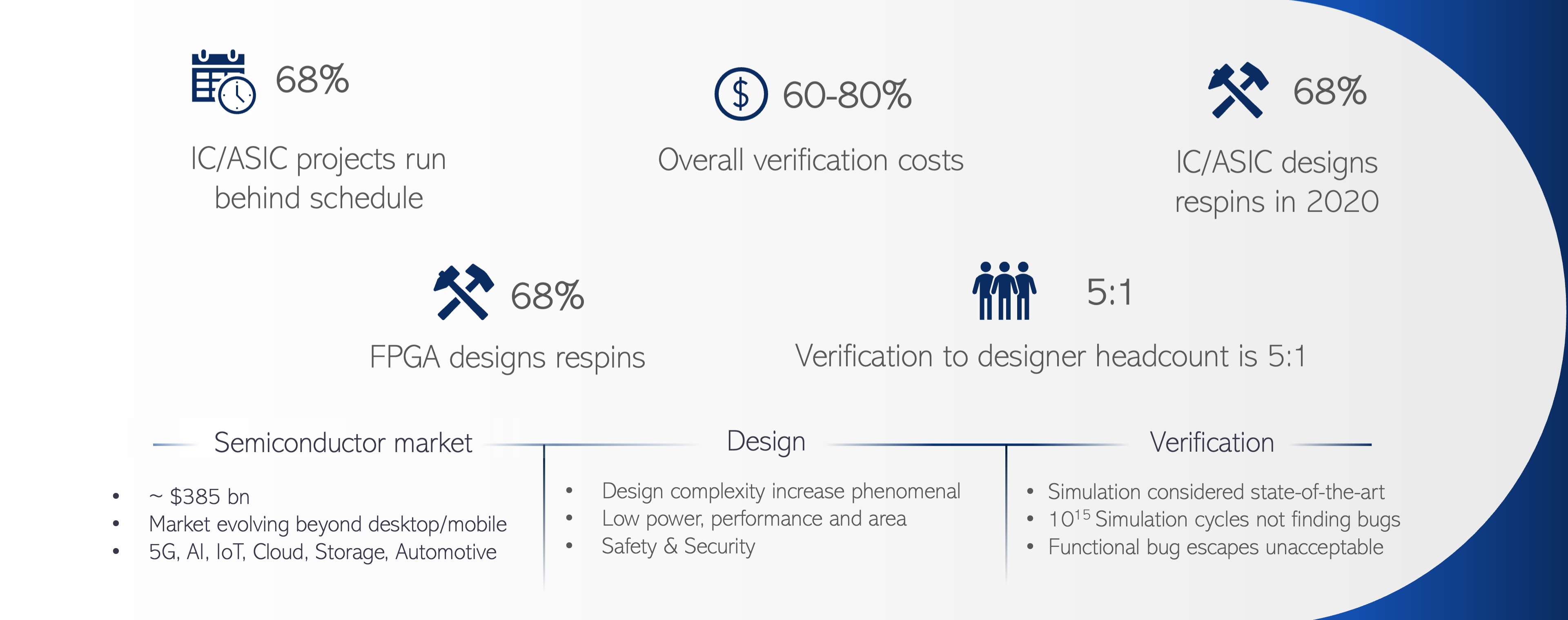

The 2020 Wilson Research Group study on functional verification reports that 83% of the FPGA and 68% of the ASIC designs fail in the first attempt. Also noteworthy, 68% of ASIC projects run behind schedule. It also pointed out that for processor development, the ratio of verification engineers to design engineers is five to one and verification is estimated to cost about 70% of the overall project costs.

Illustration #1: Rising costs, headcount, delayed project schedules and respins lead to countless semiconductor verification challenges.

Source: Axiomise

Source: 2020 Wilson Research Group and Axiomise

Imagine hiring five times more verification resources than design and the chip still needs a respin! Senior engineering managers demand effective functional verification methodologies and techniques to reduce respins and cost and accelerate time to market.

Of course, a long-held depiction of a chip design verification environment includes simulation as a foundational tool. That view is gradually shifting from simulation to emulation. Although simulation is still the dominant technology used for digital and analog design verification, emulation is deployed for system-level verification because it is faster than simulation.

Both leverage test cases and use input sequences or programs as the primary form of testing the underlying hardware. A mismatch between expected behavior and actual design behavior is flagged as a design or a test bug. Testing, however, is only capable of showing the presence of bugs not proving their absence quoted by Edsger W. Dijkstra in 1970s.

Verification is only as strong as its weakest link. The weakest link for simulation and emulation is the input sequence of the specific test case being used to validate a specific scenario. Simulation uses functional coverage to assure that enough testing has been done, though it may not be exhaustive.

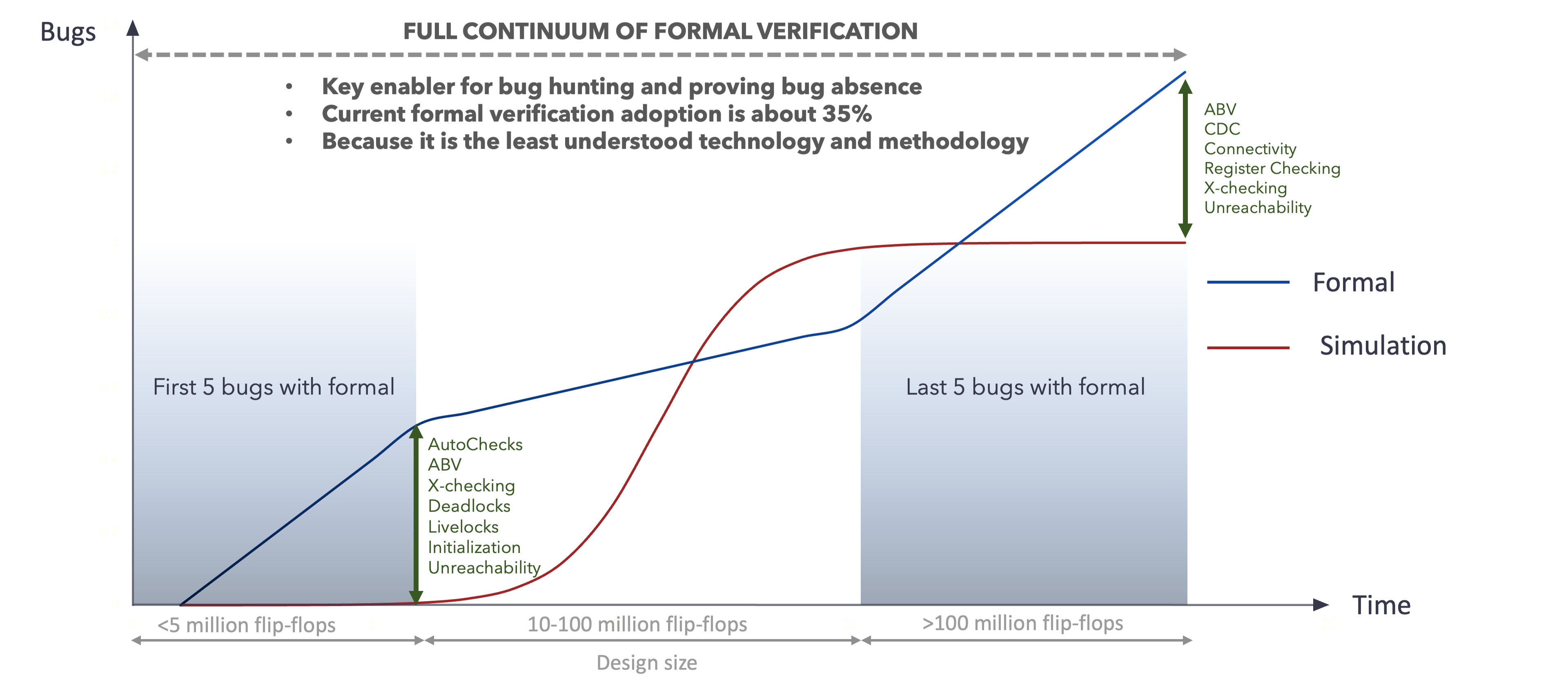

One verification methodology that may answer the demand is formal verification steeped in mathematical logic. Formal is viewed as an advantage and considered to be the only choice for many complex challenges facing verification groups.

That wasn’t always the case.

While papers on formal verification first started appearing in refereed journals and technical conferences in the 1960s, it wasn’t until the 1990s that commercial products began emerging. They weren’t market successes due to any number of reasons that could have been immature and hard-to-use products, incomplete proof results, lack of proper training and methodology. Formal verification developed a perception problem that was hard to overcome and persists today.

Despite a lack of confidence by users of the tools, a few stalwart formal experts pressed on and developed tools that could be successfully implemented in a chip design verification environment. Today, indications point to healthy, growing adoption. Still, formal methods usage in functional verification is not as effective as they could be or used consistently even with the vast resources focused on the problem.

Some insights may showcase how formal can be applied in a practical manner, all within the bounds of increasing demand, competition for hardware developers and shrinking time to market.

At its core, formal verification proves or disproves the correctness of a design’s behavior according to the specification. It verifies the quality and reliability of a chip and ensures functional verification, safety and security.

Exhaustive verification is accomplished using formal guarantees that all bugs have been found by the testbench, something that is impractical and infeasible with simulation or hardware emulation. Formal methods use a proof-centric technique, a combination of search and logical inferencing and decision making on a symbolic representation of the design. For example, formal verification tools build a mathematical model of the design and testbench to generate proof that a requirement expressed as an assertion, or a cover target is proven exhaustively.

Formal methods-based verification works on a symbolic model of the hardware and automatically abstracts the exponential complexity of the design space to build a proof of the assertion or a cover property. If the proof cannot be built, tools show a counter-example waveform just as in simulation and can be used to fix a design bug. Corner-case bugs are often found much quicker and more easily than simulation or emulation testbenches because of the intelligent way an exhaustive search is done by the formal tool.

A formal-based methodology implemented into a verification flow has the potential to be a powerful way to solve many of the chip design verification challenges. And yet, formal methods are used sparingly and for solving simple problems such as linting early in project development, or in establishing connectivity checking on a system on chip (SoC) toward the late end of the project. While bugs are effectively caught using linting and connectivity-checking apps, most functional verification is done using simulation without formal methods being considered.

In some cases, formal experts will use formal verification on one or two design blocks with no consistent adoption, methodology or reuse.

The potential of formal verification, a powerful technology with strong foundations in mathematical logic, for finding corner-case bugs and establishing proofs of bug absence throughout the project cycle remains untapped. As the simulation runs out of steam and the cost of emulation exceeds many verification budgets, implementing a formal verification methodology in a practical manner for design verification becomes more attractive.

Illustration #2: The potential of formal verification remains untapped.

Source: Axiomise

To create a meaningful impact of formal methods in the industry, it is important to create a bridge between the end-user of formal tools and the EDA tool vendor. One examples is a series of case studies showcasing best practices for formal deployment, methodology documents, training material and solving customer-specific project problems at scale efficiently.

Editor’s Note: Dr. Ashish Darbari leads Axiomise with his years of hands-on experience deploying formal at scale. Under his leadership, Axiomise takes a design, verifies it formally using a consistent methodology, and documents everything with vendor-neutral recipes. A recent example is a vendor-neutral, end-to-end formal verification solution for RISC-V and an on-demand training program.

About Ashish Darbari

Dr. Ashish Darbari has been actively using formal methods for more than two decades and is one of the foremost authorities in practical applied formal verification having trained nearly 200 designers and verification engineers globally. A keen innovator in formal verification, he is the founder and CEO of Axiomise and leads it by successfully deploying training, consulting, services and verification IP to a range of customers. Dr. Darbari has expertise in all aspects of formal methods including theorem proving, property checking and equivalence checking. He holds a Doctorate formal verification from the University of Oxford and has 44 patents in formal verification. Dr. Darbari can be reached at info@axiomise.com.