Paper Submitted by Marc Witteman, CEO, Riscure

Abstract

Hardware Fault Attacks can break software security by revealing secrets during program execution, or changing the behaviour of a program. Without profound knowledge of these attacks, it is hard to defend code effectively. Whereas traditional secure programming methods focus mostly on input validation and output control, fault resistance requires pervasive protection throughout the code. This paper explains the background and risk of fault injection, and proposes to use secure programming patterns for security critical devices. We show that repairing unprotected products suffering from fault injection vulnerabilities is hardly affordable. It is a much better strategy to use fault mitigation patterns, which help developers to lower the risk of fault injection in a cost-effective way.

1. Introduction

IoT applications affect every aspect of our lives, from in-home to automotive, health devices to critical infrastructure. Keeping these devices secure has proven to be an extremely challenging task, given their sheer volume and increasing complexity. While software vulnerabilities are numerous, there are also hardware vulnerabilities to consider. Hardware vulnerabilities used as a prelude to software exploitation are especially dangerous, as they may accelerate the attack process and create new opportunities for fraud.

Hardware Fault Injection is a class of hardware attacks that has become increasingly popular, as these attacks are powerful and have a high probability of success. Most devices today are completely vulnerable to these attacks, as developers have little awareness of the threat and do not know how to protect their code.

This paper is organized as follows: first, we explain the theory and practice of fault injection. Then we introduce mitigation measures that help make software resistant. Finally, we elaborate on the cost of fixing software in various stages of the product lifecycle, and provide recommendations to mitigate the threat in a cost-effective manner.

2. Fault Injection

Principle

Semiconductors are inherently sensitive to environmental variables, such as voltage, temperature and light. Computer chips contain millions of transistors, each of which is affected by these variables. Correct operation of a chip is therefore bound to specified ranges of these variables. Typically, computer chips start to fail when operated outside these specifications. While unintentional deviations cause malfunctions that often lead to a crash, an attacker can take advantage of a well-chosen disturbance.

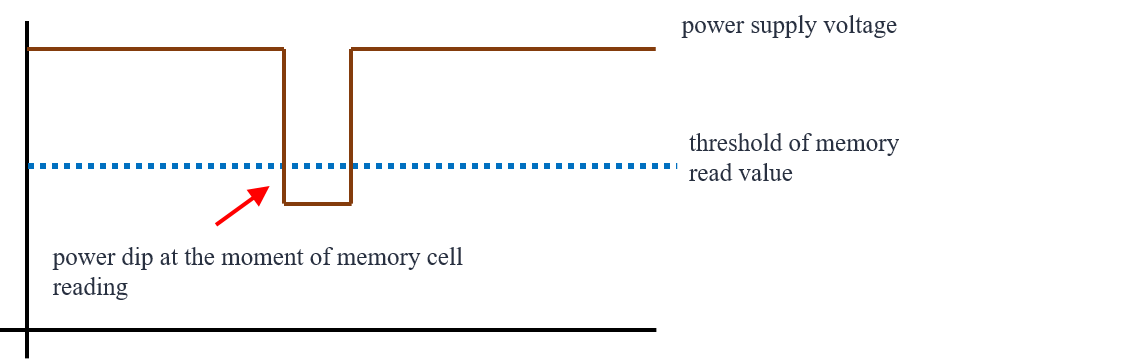

Consider the voltage glitching principle shown in Figure 1. A short dip in the power supply at the very moment of reading a memory cell may cause a wrong value to be returned. Such a wrong value could influence program results or even program flow.

Figure 1: Voltage glitching principle
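As a rough illustration of the principle in Figure 1, the following Python sketch models a faulty read as a single flipped bit and shows how that can change a decision. The function names, the flag encoding, and the choice of bit are assumptions made for this example, not measurements from a real device; actual glitches may corrupt values in other ways.

```python
def glitched_read(value: int, bit: int) -> int:
    """Model a faulty memory read: one bit of the returned value is flipped."""
    return value ^ (1 << bit)


def access_granted(auth_flag: int) -> bool:
    """Typical unprotected check: any non-zero flag counts as authenticated."""
    return auth_flag != 0


stored_flag = 0  # the device state says "not authenticated"

# Normal operation: the flag is read correctly and access is denied.
normal = access_granted(stored_flag)

# Glitched operation: a single flipped bit turns 0 into a non-zero value,
# so the same check now grants access.
faulted = access_granted(glitched_read(stored_flag, bit=3))

print(normal, faulted)
```

The point of the sketch is that the check itself is logically correct; only the value it receives has been disturbed.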

Methods

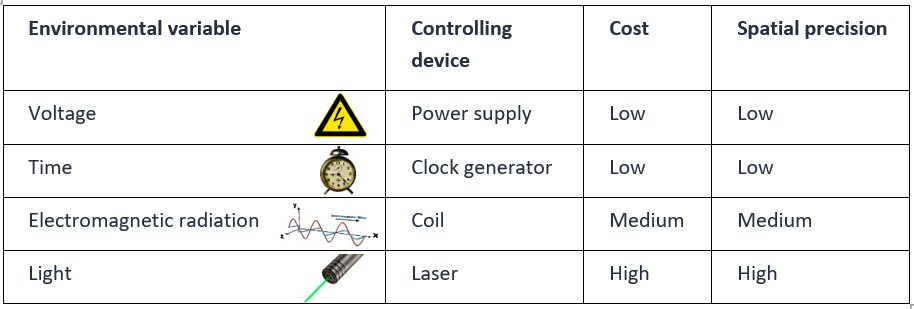

Multiple environmental variables can be manipulated, with similar effect. From the attacker's perspective, it would be ideal to have control over each transistor at any time. This requires extreme precision in time (nanoseconds) and space (nanometers). Table 1 shows the most commonly manipulated variables. The list is not exhaustive: variables that lack high temporal precision (e.g. temperature) are omitted.

Table 1: Environmental variables that matter

The table shows that price goes up with the spatial precision. A voltage glitch would affect all transistors simultaneously, whereas a light pulse can be directed at a very small group of transistors. A higher spatial precision provides a higher level of control to the attacker, enabling the most sophisticated attacks.

In some situations, standard lab tools suffice for fault injection, but more often it is necessary to use dedicated test tools in advanced security test labs. Attack tool prices range from $100 for the simplest tool to $100K for the most precise.

Impact

Fault injection leads to a change of a digital value in the computer chip. The consequence can be either a calculation mistake or a change in program flow. The latter is caused by changing the result of a condition, changing the termination of a loop, skipping instructions, or changing the program counter at a branch, jump or return. Since these constructs are so common, we estimate that at least one exploitable fault injection vulnerability exists for every 10 lines of critical code. That means that the vulnerability density is extremely high compared to logical security issues, which have a 100 times lower density (on average one per thousand lines of code).

Three common exploitation mechanisms are known:

- Dump

By changing memory pointers and/or loop termination, it is possible to make a chip transmit data other than intended at any given data communication event. This way it may be possible to leak secrets, such as passwords and keys, but also a complete code base. The latter can be very useful for an attacker seeking logical vulnerabilities.

- Privilege escalation

By changing critical decisions it may be possible to escalate privileges and bypass an authentication mechanism, such as a password verification or a secure boot verification. The attacker uses this to gain unauthorized control over a device.

- Key extraction

Many protected devices use cryptographic keys for security. This helps keep data confidential and protects the integrity of a device and its data. When a computational error is introduced during a cryptographic calculation, it may be possible for an attacker to derive the secret key by comparing the corrupted result with the expected result. When the key is compromised, the device can no longer protect itself or its user.
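The paper does not detail a specific key extraction method at this point. As one well-known illustration of how comparing a correct and a corrupted result can leak a key, the sketch below models a fault in an RSA signature computed with the Chinese Remainder Theorem. This is a different algorithm from the AES case in [5], and the tiny primes, the message, and the bit-flip fault model are assumptions chosen for readability only.

```python
from math import gcd

# Toy RSA key (far too small for real use; illustration only).
P, Q = 1009, 1013
N = P * Q
E = 65537
D = pow(E, -1, (P - 1) * (Q - 1))  # modular inverse, needs Python 3.8+


def sign_crt(message: int, fault: bool = False) -> int:
    """RSA-CRT signature; optionally corrupt the half computed modulo P."""
    sp = pow(message, D % (P - 1), P)
    sq = pow(message, D % (Q - 1), Q)
    if fault:
        sp ^= 1  # assumed fault model: one flipped bit in the mod-P half
    # Garner recombination of the two halves into a value modulo N.
    h = (pow(Q, -1, P) * (sp - sq)) % P
    return sq + Q * h


message = 42
good = sign_crt(message)
bad = sign_crt(message, fault=True)

# Both signatures agree modulo Q but differ modulo P, so their difference
# shares exactly the factor Q with the public modulus N.
recovered_q = gcd(good - bad, N)
recovered_p = N // recovered_q
print(recovered_p, recovered_q)
```

With one correct and one faulty signature of the same message, the attacker in this model factors the modulus and can therefore reconstruct the private key.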

Practical application

While fault injection creates a hurdle for many attackers who are not familiar with hardware hacking, this method is rapidly gaining popularity because of its power, success probability, and decreasing cost.

Many experiments and actual hacks demonstrate the vulnerability of contemporary products. A few examples:

- A glitch attack on the Xbox 360 [4]

Gligli used a short voltage dip on the reset line to bring the Xbox into a special state in which the memcmp function would report a match even when the compared data differed. This allows bypassing the secure boot verification and thus installing rogue code. The attack has been productized as a small add-on board, so that any user can apply it without in-depth knowledge.

- Escalating privileges in Linux based products [6]

Niek Timmers and Cristofaro Mune discovered that, with voltage glitch equipment, an attacker can easily get root privilege in a Linux system. Since many products build on a Linux kernel, this puts large product segments at risk.

- Extracting secret keys from a PlayStation Vita [5]

Yifan Lu demonstrated that cryptographic signatures in a popular gaming console can be corrupted and that cryptographic keys can be extracted using a textbook Differential Fault Analysis method.

- Breaking into a cryptocurrency wallet [7]

Sergei Volokitin managed to break the authentication mechanism of a hardware wallet using electromagnetic pulses. This method is interesting because it requires no hardware modifications or connections to the device. With the result it is possible to extract all currency from the wallet without having any credentials.

3. Mitigation

Hardware Fault Injection primarily exploits hardware vulnerabilities. While these can be fixed in hardware, this is typically expensive, or not an option for product developers who need to build on a given hardware platform. Fortunately, the problem can also be mitigated in software. Three strategies can (and should) be applied:

- Resist: increase code resistance such that faults are less likely to disturb program behavior.

An attacker using fault injection aims for a specific effect, e.g. changing a value or decision. Resistance can be introduced by making useful values hard to achieve (avoid 0 vs 1, but use complex values like 0xA5 or 0x3E), or by creating non-determinism, making it hard to find the right timing to manipulate a decision.

- Recover: code resilience to prevent insecure behavior following a fault.

Even when fault injection is successful, it is possible to make resilient code that would continue correct execution and prevent exploitation. This includes double-checking to verify value correctness and crypto results, but also double-checking conditional statements, branches, loops, and program flow.

- Respond: actions to deter attackers after detecting a fault.

Immunity is hard, especially when so many vulnerabilities exist. It is therefore important to detect fault attempts, and act accordingly. For this purpose, traps can be used that catch fault injection attempts and subsequently disable product functionality.
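The three strategies can be combined in a single check. The following Python sketch is a logic-level model only, not one of the patterns from the full article: the class name, the fault limit, the random delay length, and the use of a repeated comparison are all assumptions for illustration. Firmware would normally express the same ideas in the device's own language.

```python
import hmac
import secrets

# Resist: "complex" result codes as suggested in the text, instead of 1 and 0.
AUTH_OK = 0xA5
AUTH_FAIL = 0x3E


class FaultDetected(Exception):
    """Raised by the trap when redundant state is inconsistent."""


class Guard:
    FAULT_LIMIT = 3  # hypothetical number of detections before lock-out

    def __init__(self) -> None:
        self.fault_count = 0

    @property
    def disabled(self) -> bool:
        return self.fault_count >= self.FAULT_LIMIT

    def _trap(self) -> None:
        # Respond: record the attempt; functionality is disabled at the limit.
        self.fault_count += 1
        raise FaultDetected("inconsistent state")

    def verify(self, entered: bytes, stored: bytes) -> int:
        if self.disabled:
            return AUTH_FAIL
        # Resist: a random delay makes the timing of the decision less predictable.
        for _ in range(secrets.randbelow(16)):
            pass
        result = AUTH_FAIL  # fail by default
        if hmac.compare_digest(entered, stored):
            # Recover: repeat the comparison before trusting the first outcome.
            if not hmac.compare_digest(stored, entered):
                self._trap()
            result = AUTH_OK
        return result

    def decide(self, result: int) -> bool:
        if result == AUTH_OK:
            return True
        if result == AUTH_FAIL:
            return False
        # Any other value cannot occur in normal operation: treat it as a fault.
        self._trap()
```

In this model a corrupted result code such as `AUTH_OK ^ 0x01` matches neither constant, so `decide` raises `FaultDetected` instead of silently granting or denying access, and repeated detections leave the guard disabled.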

These strategies are elaborated in code patterns that have the advantage that they can be repeatedly applied, without making a detailed design for each instance.

The full article, “Fault Mitigation Patterns”, can be downloaded from the Riscure website and describes a collection of fault mitigation patterns that increase fault injection robustness. These patterns typically comprise a few lines of code. Each pattern may need a small adaptation to fit the specific code and resolve the associated vulnerability.

It is our experience that these patterns, if well understood, can be applied efficiently, at an average cost of 12 minutes per instance. Furthermore, there is typically no need to protect the entire code base: protecting the critical code is often good enough.

4. The cost of Fault Injection vulnerabilities and mitigation

Fault Injection is a threat for electronic devices that need to be secure in a hostile environment. For some products, the attacker may even be the user: think of set-top boxes or gaming consoles. These devices restrict access to premium content, and should resist users tampering with the protection mechanisms. Other devices are frequently stolen (e.g. bankcards and phones) and need protection against criminals trying to get access to assets protected by the device.

Critical device defects are identified at different stages:

- Design

- Implementation

- Delivery (testing prior to market introduction)

- Deployment (issues detected in the field are followed by maintenance)

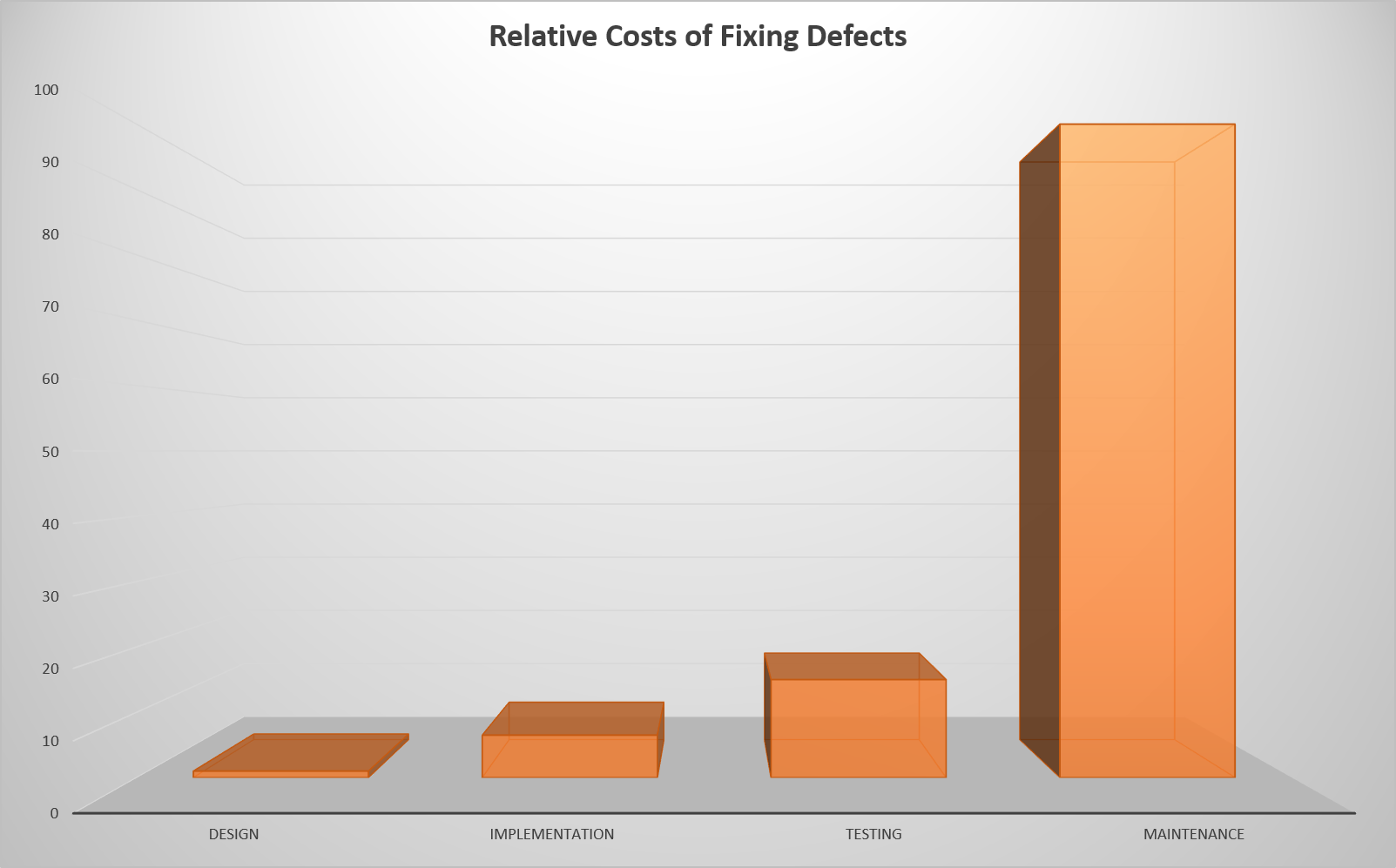

An IBM study showed that the relative cost of fixing defects grows fast over time (Figure 2). While this strong increase may initially seem excessive, this is actually not hard to imagine: compare fixing a mistake you made yesterday to analyzing and resolving the bug your previous (and not-so-brilliant) coworker made last year.

Figure 2: IBM System Science Institute, Relative Cost of Fixing Defects

In addition to the repair cost of products broken in the field, there are also costs associated with brand damage (reduced public trust in product or vendor), and (fraud related) damage or penalties (cost caused by malfunction in the field).

A sad but illustrative example of mounting cost for late-identified issues is the impact of the 737 MAX software bug on Boeing. In March 2019, the airliner was grounded following two dramatic crashes. Over the next 6 months, Boeing lost 17% of its market capitalization (more than $40B in value evaporated). CNN estimates that the total cost of the incident to the company reached $18.7B within a year [8].

While the actual cost of late fixing (after problems surfaced in the field) varies, and is often higher, we take the IBM estimate of a 100-fold increase compared to early addressing in the design phase as a reference factor in our analysis. This helps compare the cost of resolving fault related security issues.

We learned that applying a Fault Mitigation Pattern costs a trained developer on average 12 minutes. We base this on our experience of training developers, and observing the speed of applying this knowledge. For repairing code that appeared to be flawed in the field, we follow the 100-fold factor proposed by IBM, thereby assuming that each vulnerability will cost 20 hrs to fix in maintenance (100 × 12 minutes = 1,200 minutes).

To study the cost of bug fixing, we consider a hypothetical IoT product development case. We base our estimates on the following assumptions:

- An average IoT product has 100 KLOC, but only 5% = 5 KLOC critical code

- Developing a 100 KLOC product costs 5 man-years of work ≈ 9 k hrs

- A developer costs $100 per hour

- Total development cost: $ 900 K

Now, let us compare the two extremes: design phase vs deployment phase:

- Design Phase: Applying fault mitigation patterns

  - 80 hrs training ≈ $ 20 K training cost

  - 12 minutes per pattern instance

  - 5 KLOC critical code → 500 pattern instances ≈ 100 hrs = $ 10 K

  - Total cost: $ 30 K

- Deployment Phase: Fixing bugs found in the field

  - 20 hrs analysis and fixing time per vulnerability (per the IBM study)

  - 5 KLOC critical code → 500 vulnerability fixes ≈ 10,000 hrs

  - Total cost: 10,000 hrs ≈ $ 1 M

This example shows that avoiding FI vulnerabilities may add only about 3% to your development cost, whereas fixing the product after a problem surfaces in the field may cost roughly 110% of the original development cost. The latter choice may be cost-prohibitive, and kill the product.
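The arithmetic behind this comparison can be reproduced with a short Python calculation. All figures are the assumptions stated above; only the variable names are introduced here.

```python
HOURLY_RATE = 100          # $ per developer hour
DEV_HOURS = 9_000          # 5 man-years for a 100 KLOC product
CRITICAL_LOC = 5_000       # 5% of 100 KLOC
LINES_PER_VULN = 10        # one exploitable FI vulnerability per 10 critical lines
MINUTES_PER_PATTERN = 12   # design-phase effort per pattern instance
LATE_FIX_FACTOR = 100      # IBM reference factor for fixing in the field
TRAINING_COST = 20_000     # $ for 80 hrs of training, as stated in the text

instances = CRITICAL_LOC // LINES_PER_VULN           # 500
dev_cost = DEV_HOURS * HOURLY_RATE                    # $900,000

# Design phase: training plus 12 minutes per pattern instance.
design_hours = instances * MINUTES_PER_PATTERN / 60   # 100 hrs
design_cost = TRAINING_COST + design_hours * HOURLY_RATE

# Deployment phase: each fix costs 100 times the design-phase effort.
field_hours_each = MINUTES_PER_PATTERN * LATE_FIX_FACTOR / 60  # 20 hrs
field_cost = instances * field_hours_each * HOURLY_RATE

print(f"design phase:     ${design_cost:,.0f} ({design_cost / dev_cost:.1%})")
print(f"deployment phase: ${field_cost:,.0f} ({field_cost / dev_cost:.1%})")
```

Under these assumptions the design-phase cost is $30,000 (3.3% of development cost) and the deployment-phase cost is $1,000,000 (111.1%), which matches the rounded percentages quoted above.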

5. Conclusion

Fault Injection is a growing threat to devices that need to be secure in the field. While the problem originates in hardware, it manifests itself in software, allowing attackers to break product security in a variety of ways. Over the past years several examples have been reported of products broken in the field by means of FI.

Since the number of FI vulnerabilities in software is overwhelming, there is a need for a systematic mitigation approach. We propose to use Fault Mitigation Patterns, a set of generic countermeasures that can be applied throughout the code, and require little adaptation for repeated application.

While the mitigation cost during product development may be limited to a mere 3% of the total development cost, this is expected to go up to more than 100% in case an unprotected product needs to be repaired after FI vulnerabilities surfaced in the field.

We strongly recommend that IoT developers seek increased resistance by applying fault mitigation patterns.

6. References

[1] Bilgiday Yuce, Patrick Schaumont, Marc Witteman, Fault Attacks on Secure Embedded Software: Threats, Design and Evaluation, https://arxiv.org/pdf/2003.10513.pdf

[2] Niek Timmers, Albert Spruyt, Marc Witteman, Controlling PC on ARM using Fault Injection, https://www.riscure.com/publication/controlling-pc-arm-using-fault-injection/

[3] Niek Timmers, Albert Spruyt, Bypassing Secure Boot using Fault Injection, https://www.riscure.com/publication/bypassing-secure-boot-using-fault-injection/

[4] Gligli, Reset glitch hack, http://gligli360.blogspot.com/2011/08/glitched.html

[5] Yifan Lu, Attacking Hardware AES with DFA, https://yifan.lu/images/2019/02/Attacking_Hardware_AES_with_DFA.pdf

[6] Niek Timmers, Cristofaro Mune, Escalating Privileges in Linux using Voltage Fault Injection, https://www.riscure.com/uploads/2017/10/Riscure_Whitepaper_Escalating_Privileges_in_Linux_using_Fault_Injection.pdf

[7] Sergei Volokitin, Glitching the KeepKey hardware wallet, https://www.riscure.com/blog/glitching-the-keepkey-hardware-wallet

[8] CNN, The cost of the Boeing 737 Max crisis: $18.7 billion and counting, https://edition.cnn.com/2020/03/10/business/boeing-737-max-cost/index.html

_________________________________________________________________

About Marc Witteman

Marc Witteman has a long track record in the security industry. He has been involved with a variety of security projects for over three decades and worked on applications in mobile communications, payment industry, identification, and pay television. Recent work includes secure programming and mobile payment security issues.

He has authored several articles on embedded device security issues and he has extensive experience as a trainer, lecturing security topics for audiences ranging from novices to experts. As a security analyst he co-developed several tools for testing hardware and software security.

Marc has an MSc in Electrical Engineering from the Delft University of Technology in the Netherlands. From 1989 till 2001 he worked for several telecom operators, the ETSI standardization body and a government lab.

In 2001 he founded Riscure, and in almost two decades he developed the company into a world-renowned security test lab and security test tool vendor. In 2010 Marc started Riscure Inc, the US branch of Riscure, based in San Francisco, and in 2017 he added a branch office in Shanghai. Being a technical entrepreneur, he holds both the CEO and CTO role at Riscure.